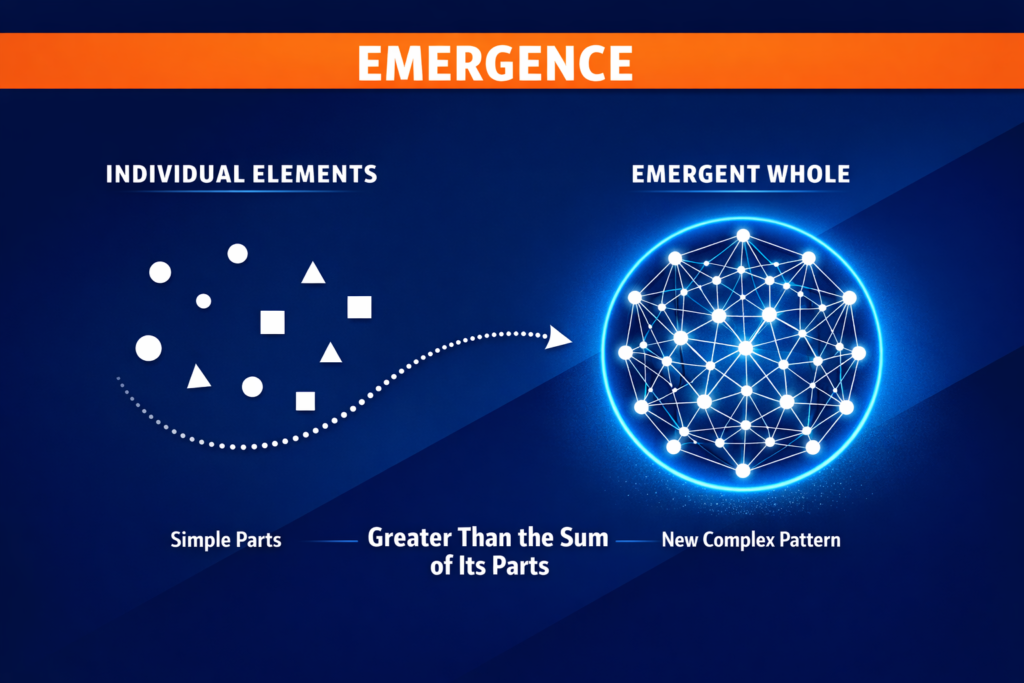

Most explanations assume a clean chain: input → output. Change something here, get a predictable result there. That works for simple setups. It breaks fast once people, timing, and interactions enter the picture. Because then you’re not dealing with a system that “responds.” You’re dealing with a system where things build on each other. That’s emergence.

It’s not one cause — it’s accumulation

Emergence is what happens when lots of small, local actions stack and interact until they produce something larger. Nothing dramatic on its own – one user hesitates a bit longer, one step in a workflow takes slightly more time, one person influences two others instead of one. Individually, irrelevant. Together, they start to bend the system. At first, nothing looks different. Then suddenly it does – a funnel that looked fine starts leaking hard, a process that felt stable starts backing up, adoption that was flat suddenly picks up. Not because of one big change. Because enough small things lined up.

Why people misread this

We usually look at end states – conversion rate dropped, queue length increased, growth slowed. Then we try to explain it backwards. But by the time you see the result, the actual process is already over. The interactions that caused it are gone. What you’re left with is a summary. And summaries are misleading in systems like this. They flatten everything: who influenced whom, when things happened, where pressure started building. So you end up with explanations that sound reasonable but don’t really explain anything.

The timing problem

One thing that keeps showing up in emergent systems is timing. Not just what happens, but when it happens. A delay early in a process doesn’t look like a problem. It’s small, almost invisible. But it shifts everything that comes after it. Things start arriving slightly out of sync. Work piles up in one place, then another. Nothing is broken. But the system is now operating differently. You won’t see that in an average. You won’t see it in a final metric. You only see it if you follow the sequence.
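To make that concrete, here is a minimal sketch of a single-server queue. It is a toy, not a model of any real workflow: the function name `simulate_queue`, the ten jobs, the one-unit service time, and the three-unit upstream delay are all assumptions picked for illustration. Both runs handle the same jobs with the same capacity; the only difference is that an early delay makes the first few jobs arrive together.

```python
def simulate_queue(arrival_times, service_time):
    """Single-server, first-come-first-served queue (hypothetical toy model).

    Returns how long each job waits before its service starts.
    """
    waits = []
    server_free_at = 0
    for arrival in sorted(arrival_times):
        start = max(arrival, server_free_at)  # wait if the server is busy
        waits.append(start - arrival)
        server_free_at = start + service_time
    return waits


# Ten jobs, one per time unit, each taking one time unit to serve.
smooth_arrivals = list(range(10))

# Assumed early delay: anything due before t=3 is held upstream until t=3.
delayed_arrivals = [max(t, 3) for t in smooth_arrivals]

smooth_waits = simulate_queue(smooth_arrivals, service_time=1)
delayed_waits = simulate_queue(delayed_arrivals, service_time=1)

print("smooth :", smooth_waits)
print("delayed:", delayed_waits)
```

In the smooth run nobody waits. In the delayed run the waits come out as 0, 1, 2, 3, 3, 3, 3, 3, 3, 3: the server has no slack, so the backlog created at the start never clears, and jobs that arrived exactly on schedule still wait. Job count and service time are identical in both runs, which is why a summary of those alone would not show the difference.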

Why small changes don’t stay small

Another thing people underestimate is how uneven impact is. Some changes do nothing. Others flip the system. You can push on a system for a while and nothing happens. Then you cross a point and things move fast. That’s not randomness. It’s structure. There are thresholds where interactions start reinforcing each other instead of canceling out. Once you’re past that point, the system behaves differently. That’s why growth can feel “sudden.” Why overload feels like it came out of nowhere. Why fixing one small thing sometimes changes everything.
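One way to see a threshold like this is a toy version of Granovetter’s threshold model of collective behavior. It is swapped in here purely as an illustration, not as the mechanism behind any particular funnel or network. The function name `cascade_size` and the population of 100 people are assumptions for the sketch. Each person joins once the number of people already participating reaches their personal threshold.

```python
def cascade_size(thresholds):
    """Toy threshold cascade (illustrative assumption, not a fitted model).

    A person participates once the number of current participants is at
    least their threshold. Repeats until no one new joins.
    """
    active = 0
    while True:
        new_active = sum(1 for th in thresholds if th <= active)
        if new_active == active:  # nobody new joined: the cascade has stopped
            return active
        active = new_active


# Thresholds 0, 1, 2, ..., 99: each person needs one more participant
# than the previous person did.
chain = list(range(100))

# Same population, except one person's threshold moves from 1 to 2.
broken_chain = list(chain)
broken_chain[1] = 2

print(cascade_size(chain))
print(cascade_size(broken_chain))
```

With the unbroken chain, everyone ends up participating: all 100. With one threshold nudged from 1 to 2, the cascade stops at a single person. The two populations are almost identical on average, but the outcome depends on whether each step can trigger the next.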

It’s not complicated — just not linear

There’s a tendency to label this as “complex,” but that’s not really the issue. The rules themselves are often simple. What’s non-intuitive is that simple rules + interaction ≠ simple outcome. You can understand every part of the system and still miss what the system will do, because the outcome depends on how those parts connect and influence each other over time. That’s the piece most explanations skip.

What actually matters

Once you look at systems this way, the focus shifts. Instead of asking “What’s the result?”, you start asking:

- Where do interactions happen?

- Where can things accumulate?

- Where does timing start to matter?

- Where could a small change propagate?

Those questions get you closer to the actual mechanism. And once you see the mechanism, the outcome stops feeling surprising.

Why this matters in practice

This shows up everywhere, but it’s easiest to spot in things like funnels and workflows. You don’t lose users only at the step where they drop off. You lose them because of everything that happened before that point. You don’t get overload only when capacity is exceeded. You get it because timing and flow drifted out of sync earlier. You don’t get uneven growth because of one campaign. You get it because influence spreads unevenly across a network. All of these are emergent effects. You won’t fix them by tweaking one number in isolation.

The takeaway

Emergence isn’t some abstract theory. It’s just a more honest description of how systems behave when interactions matter. Things don’t fail, grow, or shift for a single reason. They get there through accumulation. If you ignore that, everything looks unpredictable. If you see it, most outcomes start to make sense. Not because they’re simple. But because you’re finally looking at the right level.